Can AI Replace Doctors? The Truth About Medical AI in 2026

AI will NOT fully replace doctors in 2026 or even in the next decade. What it WILL replace are specific tasks: image reading, paperwork, triage, coding support, and parts of clinical decision support. The winning future is a hybrid model: AI handles the heavy data work, while doctors handle judgment, ethics, trust, and responsibility. Doctors who learn AI tools will become faster, more valuable, and more in demand.

Why This Question Is Exploding in 2026

The fear that “AI will replace doctors” is not just social media hype. It is fueled by real changes happening inside hospitals and clinics:

• AI diagnostic models are matching specialist-level performance in narrow tasks (like image classification).

• Hospitals are under pressure to reduce costs, increase throughput, and reduce errors.

• Doctor shortages in many regions force systems to use automation wherever possible.

• Administrative burden is breaking clinicians: documentation, coding, prior authorization, and compliance work.

So when people ask “Can AI replace doctors?”, what they really mean is:

Will AI replace doctors’ income, status, or control in healthcare? Will hospitals reduce headcount? Will patients trust machines?

To answer honestly, we must separate: (1) what AI can do well, (2) what medicine requires, (3) what law and ethics allow, and (4) what patients will accept.

What Counts as Medical AI?

Medical AI is a set of software and hardware systems that use machine learning (ML), deep learning, natural language processing (NLP), and predictive analytics to assist clinical and operational healthcare work.

In practical terms, medical AI shows up as:

• Imaging AI (radiology, pathology, dermatology)

• Symptom checkers / virtual triage assistants

• Clinical decision support (drug interactions, risk scoring)

• Documentation automation (speech-to-text + note generation)

• Workflow and scheduling optimization

• Fraud detection and billing optimization

• Remote monitoring + anomaly detection

• Drug discovery and clinical trial optimization

Important: Most “AI in medicine” today is not a robot doctor. It’s software embedded in hospital systems that supports clinicians and admin teams.

AI vs Doctors: The Core Comparison

Use this table as a reality check before you believe viral headlines.

| Category | AI Systems | Human Doctors |
|---|---|---|
| Speed at pattern analysis | Very high (milliseconds) | Limited by time and attention |
| Consistency | High if data is stable | Varies by fatigue/experience |
| Empathy and trust | No true empathy | Core strength |
| Contextual reasoning | Weak outside training patterns | Strong, flexible judgment |
| Ethics and values | Rule-based | Human moral reasoning |
| Accountability | Shared/unclear (vendor + hospital + doctor) | Clear professional responsibility |
| Learning new edge cases | Needs data + retraining | Learns from experience quickly |
| Communication | Can explain superficially | Can persuade, reassure, negotiate |

Where AI Can Replace Parts of Doctors’ Work

Here’s the truth: AI will not replace “doctorhood” as a whole, but it can replace tasks—especially tasks that are repetitive, data-heavy, or rules-driven. These are the areas where AI already produces measurable ROI for hospitals and clinics.

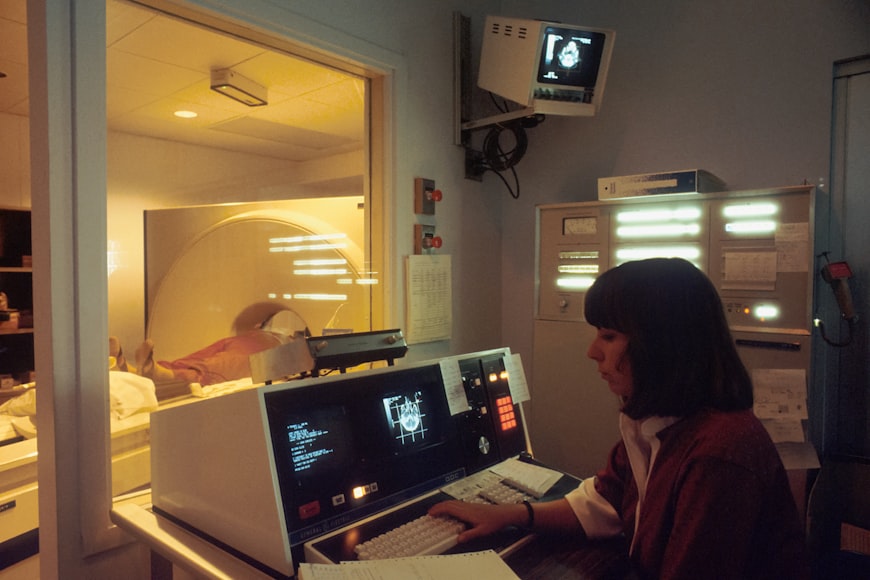

1) Imaging and Visual Pattern Recognition

Radiology, pathology, and dermatology are high-impact areas because many workflows are image-centered. AI can:

• Flag suspected tumors, fractures, strokes, bleeds, and pneumonia patterns

• Prioritize urgent cases (triage) to reduce time-to-treatment

• Reduce missed findings in high-volume departments

• Support second-read workflows and quality assurance

But here’s the limitation: imaging is not the full diagnosis. The final clinical decision still needs patient history, labs, physical exam, comorbidities, and context.

2) Documentation and Medical Scribing Automation

One of the biggest “replacement” impacts is paperwork. AI can reduce the time doctors spend typing by:

• Transcribing consultations

• Generating structured notes (SOAP, discharge summaries)

• Suggesting coding and billing phrases

• Creating patient instructions and follow-up summaries

This doesn’t replace the doctor. It replaces the time-wasting parts of doctor work, which often improves satisfaction and reduces burnout. That is why clinics pay for these tools.

3) Triage and Symptom Checking

AI triage tools can ask standardized questions, assess risk, and route patients to:

• self-care guidance,

• a nurse line,

• an urgent appointment,

• emergency services.

For high-volume systems, this reduces overload. But it does not equal diagnosis. Triage is about prioritizing, not concluding.
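To make "prioritizing, not concluding" concrete, here is a minimal sketch of how a rule-based triage router might map questionnaire answers to the four destinations above. The `route_patient` function, the red-flag list, and the score thresholds are all hypothetical and chosen only for illustration; real triage tools rely on clinically validated protocols.

```python
from dataclasses import dataclass, field
from enum import Enum


class Route(Enum):
    SELF_CARE = "self-care guidance"
    NURSE_LINE = "nurse line"
    URGENT_APPOINTMENT = "urgent appointment"
    EMERGENCY = "emergency services"


@dataclass
class TriageAnswers:
    # Hypothetical inputs: a 0-1 risk score from a questionnaire model
    # plus any red-flag symptoms the patient reported.
    risk_score: float
    red_flags: set[str] = field(default_factory=set)


# Hypothetical red flags that bypass scoring entirely.
EMERGENCY_RED_FLAGS = {"chest pain", "difficulty breathing", "sudden weakness"}


def route_patient(answers: TriageAnswers) -> Route:
    """Return a destination. This prioritizes care; it does not diagnose."""
    if answers.red_flags & EMERGENCY_RED_FLAGS or answers.risk_score >= 0.9:
        return Route.EMERGENCY
    # Illustrative thresholds only, not clinically validated.
    if answers.risk_score >= 0.7:
        return Route.URGENT_APPOINTMENT
    if answers.risk_score >= 0.3:
        return Route.NURSE_LINE
    return Route.SELF_CARE


print(route_patient(TriageAnswers(risk_score=0.1)).value)
print(route_patient(TriageAnswers(risk_score=0.1, red_flags={"chest pain"})).value)
```

Red flags are checked before the score so that a low model score can never downgrade an obvious emergency.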

4) Risk Prediction and Preventive Care

Predictive models can estimate the probability of deterioration, readmission, and chronic disease progression. This is valuable for population health and insurance systems.

Example outcomes AI can improve:

• identifying high-risk patients early,

• optimizing follow-up schedules,

• reducing avoidable admissions,

• improving medication adherence interventions.

5) Billing, Coding, and Revenue Cycle Optimization

This is the “silent replacement” area that many doctors ignore. AI can optimize revenue and reduce denials by:

• validating codes,

• detecting missing documentation,

• flagging claim risks,

• identifying payer-specific rejection patterns,

• automating prior authorization logic.
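As a rough illustration of where a pre-submission check sits in the workflow, the sketch below flags claim risks before a claim goes out. The `Claim` fields, the `precheck_claim` function, and the placeholder codes `PROC-A`/`PROC-B` are hypothetical; commercial tools typically learn payer-specific patterns from historical denials rather than using a fixed rule list like this one.

```python
from dataclasses import dataclass, field
from typing import Optional

# Hypothetical rule tables using placeholder codes (not real billing codes).
CODES_REQUIRING_MODIFIER = {"PROC-A"}
CODES_REQUIRING_PRIOR_AUTH = {"PROC-B"}


@dataclass
class Claim:
    # Hypothetical, simplified claim record.
    claim_id: str
    procedure_codes: list[str]
    modifiers: list[str] = field(default_factory=list)
    has_signed_note: bool = False
    prior_auth_id: Optional[str] = None


def precheck_claim(claim: Claim) -> list[str]:
    """Return human-readable risk flags; an empty list means no rule fired."""
    flags = []
    if not claim.has_signed_note:
        flags.append("missing signed documentation")
    for code in claim.procedure_codes:
        if code in CODES_REQUIRING_MODIFIER and not claim.modifiers:
            flags.append(f"{code}: modifier required")
        if code in CODES_REQUIRING_PRIOR_AUTH and claim.prior_auth_id is None:
            flags.append(f"{code}: prior authorization missing")
    return flags


claim = Claim("C-001", ["PROC-A", "PROC-B"], has_signed_note=True)
print(precheck_claim(claim))
# ['PROC-A: modifier required', 'PROC-B: prior authorization missing']
```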

Where Doctors Stay Irreplaceable

Even if AI becomes perfect at many narrow tasks, doctors remain irreplaceable in areas that require meaning, trust, and accountability.

If you want a simple rule: The more human the situation, the less replaceable the doctor.

1) High-Stakes Judgment Under Uncertainty

Medicine is messy. Symptoms are vague. Patients have incomplete histories. Multiple diseases overlap. AI performs best when inputs are clean and standardized—but real clinics are not clean.

Doctors excel at:

• reasoning when data is missing,

• balancing multiple risks and outcomes,

• making decisions under uncertainty,

• adapting when the “protocol” does not fit the person.

2) Ethics, Consent, and Moral Responsibility

AI can output probabilities. It cannot carry moral responsibility.

Doctors must handle:

• end-of-life discussions,

• treatment refusals,

• consent and autonomy conflicts,

• cultural and religious sensitivity,

• balancing family pressures with patient rights.

No hospital wants a headline: “Algorithm decided the patient’s outcome.” Responsibility lands on humans.

3) Patient Trust and Communication

Trust is not just “explaining facts.” It’s emotional calibration. It’s reassurance. It’s adapting language to a patient’s fear level and education. It’s persuading a patient to follow treatment.

AI can provide information. But it cannot authentically bond with a patient or carry the human relationship that medicine depends on.

4) Physical Examination and Procedural Skill

Until robotics becomes universally deployed (and affordable), doctors will remain central for physical exams, procedures, and interventions. Even with robotic assistance, a surgeon is still responsible.

AI can guide. It cannot fully replace tactile skill, real-time improvisation, and clinical instinct under pressure.

So… Will AI Replace Doctors’ Jobs?

The honest answer is: AI will replace SOME job roles and SOME tasks, but it will mostly change doctors’ work rather than eliminate doctors.

Think of it like this:

• AI replaces repetitive, data-heavy tasks.

• Doctors shift toward complex decision-making, patient communication, and oversight.

• The number of doctors needed is unlikely to drop, because healthcare demand is rising; what changes is productivity per doctor.

However, certain specialties and roles will be affected more than others.

Which Medical Specialties Are Most Exposed to Automation?

This table is not “doom.” It’s a strategic map for career planning.

| Specialty/Role | AI Exposure | Why | Human Moat |
|---|---|---|---|
| Radiology (routine reads) | High | Image pattern recognition is AI-friendly | Complex cases + clinical correlation + accountability |
| Pathology (screening) | High | Slide scanning + detection tasks | Final diagnosis + edge cases + lab integration |
| Dermatology (screening) | Medium-High | Visual triage via photos | Treatment planning + patient context + procedures |
| General practice | Medium | Triage + documentation automation | Trust, broad judgment, coordination of care |
| Emergency medicine | Medium | Risk scoring + triage tools | High-stakes decisions + human leadership |
| Surgery | Medium | Robotic assistance + planning | Procedural skill + accountability + improvisation |
| Psychiatry | Low-Medium | Chatbots support screening | Human connection + ethics + nuance |

The Business Truth: Why Hospitals Buy AI

Hospitals rarely buy AI because it’s “cool.” They buy it for ROI. The strongest buyer-intent reasons are:

• Reduce time-to-diagnosis (better outcomes and throughput)

• Reduce errors (lower liability and malpractice risk)

• Cut administrative cost (documentation + coding + scheduling)

• Improve revenue cycle (fewer denials, faster collections)

• Improve resource utilization (beds, imaging equipment, staff)

Medical AI Cost vs ROI: What Clinics Actually Evaluate

Use this buyer-intent table to structure your purchasing decision (or your affiliate comparisons).

| AI Category | Typical Cost Driver | Main ROI Benefit | Fastest Win |
|---|---|---|---|
| AI Scribe / Documentation | Per provider per month | Time saved + reduced burnout | Immediate (weeks) |
| Imaging AI | Per study or enterprise license | Faster triage + fewer misses | Medium (months) |
| Triage Chatbot | Platform subscription | Reduced call volume + better routing | Fast (weeks to months) |
| Revenue Cycle AI | Percent of savings or license | Fewer denials + improved cash flow | Fast (1–3 months) |
| Remote Monitoring AI | Per patient per month | Prevent admissions + proactive care | Medium (months) |
| Clinical Risk Scoring | Enterprise license | Predictive interventions | Medium (3–6 months) |

The Big Problem: Bias, Safety, and Hallucinations

If medical AI were perfect, the debate would be over. But there are real risks:

• Bias: Models trained on non-representative data can underperform for certain populations.

• Safety: A small error rate can be catastrophic in medicine.

• “Hallucinations”: Some AI systems can generate confident-sounding but incorrect outputs.

• Distribution shift: Model performance can degrade when patient populations or equipment change.

• Over-reliance: Clinicians may trust AI too much and reduce critical thinking.

Because of these risks, responsible deployment requires human oversight, auditing, and strong governance.
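One common way to audit for distribution shift is to compare the distribution of a model's recent output scores against a baseline period. The sketch below computes a population stability index (PSI) over equal-width bins; the function name, the 10-bin choice, and the assumption that scores lie in [0, 1] are illustrative, not taken from any specific vendor tool.

```python
import math


def population_stability_index(baseline: list[float], recent: list[float], bins: int = 10) -> float:
    """PSI between two non-empty samples of model scores in [0, 1], using equal-width bins."""
    eps = 1e-6  # floor for empty bins, avoids log(0) and division by zero

    def proportions(scores: list[float]) -> list[float]:
        counts = [0] * bins
        for s in scores:
            idx = min(max(int(s * bins), 0), bins - 1)  # a score of 1.0 lands in the last bin
            counts[idx] += 1
        return [max(c / len(scores), eps) for c in counts]

    expected = proportions(baseline)
    actual = proportions(recent)
    # Sum over bins of (actual - expected) * ln(actual / expected).
    return sum((a - e) * math.log(a / e) for a, e in zip(actual, expected))
```

A frequently cited rule of thumb treats a PSI below about 0.1 as stable and above about 0.25 as a significant shift, but those cutoffs are industry heuristics, not clinical standards; a rising PSI is a prompt for human review, not proof that accuracy has dropped.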

Legal Reality: Who Is Responsible When AI Is Wrong?

This is the most important reason AI will not replace doctors soon.

In most real healthcare systems, accountability still lands on humans:

• The clinician who signs the chart,

• The hospital that deploys the tool,

• The vendor that built the model (depending on contract and regulation).

In other words: AI can advise, but humans carry responsibility. That makes “full replacement” legally and ethically difficult.

Patient Psychology: Will People Accept an AI Doctor?

Most people want the best of both worlds:

• AI for speed and accuracy,

• doctors for trust and explanation.

Even if AI becomes extremely accurate, patients often need a human to:

• explain trade-offs,

• deliver bad news,

• motivate behavior change,

• handle fear and uncertainty.

Healthcare is not only science—it is relationship.

The Real Future: AI-Augmented Doctors

Instead of “AI replacing doctors,” the real shift is AI-augmented medicine:

AI does:

• pattern analysis,

• documentation support,

• risk scoring,

• operational optimization.

Doctors do:

• final judgment,

• ethics and consent,

• communication,

• responsibility and accountability.

This hybrid model increases productivity per doctor. The “winner doctor” is the one who becomes fluent in AI tools and uses them to save time and improve outcomes.

The Shocking Truth

Here’s the shocking truth that most headlines hide:

AI will not replace doctors.

AI will replace doctors who refuse to adapt.

Hospitals and clinics will reward professionals who can:

• use AI tools responsibly,

• interpret outputs critically,

• improve speed without losing safety,

• communicate clearly with patients.

So the question is not “Will AI take your job?”

The better question is: “Will you become the doctor who knows AI better than your colleagues?”

That shift creates career advantage, higher income potential, and better job security.

Action Plan: What to Do Next

If you are a doctor or medical student:

Step 1: Learn the basics of medical AI (limitations, bias, safety).

Step 2: Use AI to reduce paperwork (scribes, documentation tools).

Step 3: Develop strong communication skills (AI can’t replace trust).

Step 4: Build a specialty “moat” (procedures, complex reasoning, leadership).

Step 5: Stay updated on regulations and clinical governance.

If you are a clinic owner or hospital manager:

Step 1: Identify high-ROI use cases (documentation, billing, triage).

Step 2: Run a pilot program (limited scope, clear metrics).

Step 3: Measure outcomes (denials, time saved, throughput, safety signals).

Step 4: Train staff to avoid over-reliance and establish clear governance.

Step 5: Scale gradually with auditing and security controls.

FAQ

Q: Can AI diagnose better than doctors?

A: In some narrow tasks (like image classification), AI can match specialists. But diagnosis in real life requires context, history, ethics, and accountability—so it’s not a full replacement.

Q: Which doctors should worry most?

A: Roles that are repetitive and data-heavy may see major workflow automation. But most doctors will see augmentation, not elimination.

Q: Can patients trust AI?

A: Patients tend to trust AI more when a human doctor supervises and explains the decision.

Q: Will AI reduce doctor salaries?

A: Not automatically. It may shift value toward doctors who can deliver higher productivity and better outcomes using AI tools.

Final Verdict (Buyer-Intent Summary)

AI will not replace doctors as a profession. It will replace inefficient tasks and reshape how care is delivered.

The opportunity is massive:

• Doctors who embrace AI will gain time, reduce burnout, and become more productive.

• Hospitals that deploy AI responsibly will reduce costs and improve outcomes.

• Patients will benefit when AI is used as decision support—not as a full replacement.

If you want to win in 2026 and beyond, don’t fear AI. Learn it, control it, and use it ethically.

That is the real future of medicine.

Deep Dive: 12 Buyer Questions to Ask Before Adopting Medical AI

Use these questions to separate real medical AI platforms from marketing hype.

1) What exact clinical or operational problem does the AI solve?

A credible platform targets a specific, measurable outcome, not vague promises.

2) What data does it require and where does that data live?

If data pipelines are weak, AI accuracy collapses.

3) Was the model validated in environments similar to your hospital or clinic?

Different populations, devices, and workflows can change performance.

4) How does the tool handle uncertainty?

Good systems show confidence scores, limitations, and when to escalate to a human.
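A minimal sketch of what "knowing when to escalate" can look like in software, assuming the model reports a calibrated confidence and a flag for whether the case falls inside its validated scope. The `ModelOutput` fields, the `next_step` function, and the 0.85 threshold are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class ModelOutput:
    label: str
    confidence: float  # assumed to be a calibrated probability in [0, 1]
    in_validated_scope: bool  # e.g. supported scanner, age range, body region


def next_step(output: ModelOutput, threshold: float = 0.85) -> str:
    """Decide how an AI finding is surfaced; a clinician reviews every path."""
    if not output.in_validated_scope:
        return "escalate: outside validated scope, read without AI suggestion"
    if output.confidence < threshold:
        return "escalate: low confidence, flag for careful human review"
    return f"suggest: {output.label} (confidence {output.confidence:.2f}), clinician confirms"


print(next_step(ModelOutput("fracture", 0.95, True)))
print(next_step(ModelOutput("fracture", 0.60, True)))
```

The scope check runs first because a high confidence score means little on data the model was never validated on.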

5) What is the human-in-the-loop design?

AI must support clinicians, not override them.

6) How will errors be detected and reported?

You need an incident workflow, audit logs, and continuous monitoring.

7) Does it introduce bias or amplify existing health inequities?

Ask about fairness testing and subgroup performance.
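Subgroup performance testing can start as simply as computing sensitivity (the share of true cases the AI caught) separately for each patient group. The sketch below uses a hypothetical `(subgroup, has_condition, ai_flagged)` record format and made-up toy data; it illustrates the calculation and does not reflect any real model's results.

```python
from collections import defaultdict


def sensitivity_by_subgroup(records):
    """records: iterable of (subgroup, has_condition, ai_flagged) tuples.

    Returns {subgroup: sensitivity}. Subgroups with no true cases are omitted,
    because sensitivity is undefined without positives.
    """
    true_pos = defaultdict(int)
    positives = defaultdict(int)
    for subgroup, has_condition, ai_flagged in records:
        if has_condition:
            positives[subgroup] += 1
            if ai_flagged:
                true_pos[subgroup] += 1
    return {g: true_pos[g] / positives[g] for g in positives}


# Toy data for illustration only.
toy = [
    ("group A", True, True), ("group A", True, True), ("group A", True, False), ("group A", False, False),
    ("group B", True, True), ("group B", True, False), ("group B", True, False), ("group B", True, False),
]
print(sensitivity_by_subgroup(toy))  # group A about 0.67, group B 0.25
```

A gap like the one in this toy example is exactly the kind of result a vendor should be able to show, and explain, for each population you serve.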

8) What is the cybersecurity posture?

Look for encryption, access control, and vendor security documentation.

9) What are the legal terms and responsibility clauses?

Read liability language. Clarify accountability in writing.

10) What is the total cost of ownership (TCO) beyond the sticker price?

Include training, integration, monitoring, and change management.
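The arithmetic behind TCO is simple but easy to skip. This sketch separates one-time costs from recurring ones; the function name and every figure in the example are hypothetical, chosen only to show how a license fee can understate the real cost.

```python
def total_cost_of_ownership(license_per_year: float, integration_once: float,
                            training_once: float, monitoring_per_year: float,
                            change_mgmt_once: float, years: int) -> float:
    """One-time costs plus recurring costs over the planning horizon."""
    one_time = integration_once + training_once + change_mgmt_once
    recurring = (license_per_year + monitoring_per_year) * years
    return one_time + recurring


# Hypothetical figures: a 30,000/year license looks like 90,000 over three years,
# but integration, training, monitoring, and change management raise the total.
print(total_cost_of_ownership(30_000, 20_000, 5_000, 10_000, 5_000, years=3))  # 150000
```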

11) How will staff be trained to use it safely?

Without training, the tool becomes a risk rather than a benefit.

12) What are your success metrics?

Define measurable outcomes: time saved, denial rate, readmission rate, missed findings, throughput, patient satisfaction.
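For a pilot, two of these metrics (denial rate and documentation time per visit) can be compared between a baseline period and the pilot period as sketched below. The `PeriodMetrics` class, the `compare` function, and the example numbers are hypothetical; a real evaluation would also track safety signals, not only efficiency.

```python
from dataclasses import dataclass


@dataclass
class PeriodMetrics:
    claims_submitted: int
    claims_denied: int
    documentation_minutes: float  # total provider minutes spent on notes
    visits: int

    @property
    def denial_rate(self) -> float:
        return self.claims_denied / self.claims_submitted

    @property
    def minutes_per_visit(self) -> float:
        return self.documentation_minutes / self.visits


def compare(baseline: PeriodMetrics, pilot: PeriodMetrics) -> dict[str, float]:
    """Absolute change per metric (pilot minus baseline); negative is better for both."""
    return {
        "denial_rate_change": pilot.denial_rate - baseline.denial_rate,
        "minutes_per_visit_change": pilot.minutes_per_visit - baseline.minutes_per_visit,
    }


# Hypothetical numbers for illustration only.
baseline = PeriodMetrics(claims_submitted=1000, claims_denied=120, documentation_minutes=6000, visits=1000)
pilot = PeriodMetrics(claims_submitted=1000, claims_denied=90, documentation_minutes=4000, visits=1000)
print(compare(baseline, pilot))
```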

Case Studies: How AI Changes Real Clinical Workflows

Case Study 1: Radiology Triage for Stroke (CT/CTA)

In a busy emergency department, minutes matter. AI triage tools can flag suspected hemorrhage or large vessel occlusion, pushing the scan to the top of the reading queue. The radiologist still reads and signs the report, but the AI helps reduce time-to-notification. The economic impact is also real: faster stroke interventions can reduce ICU length of stay and improve outcomes, which affects hospital performance metrics.
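The "top of the reading queue" behavior described above is essentially a priority queue. This sketch, with a hypothetical `ReadingWorklist` class and made-up study IDs, shows AI-flagged studies moving ahead while every study still waits for a human read; commercial worklist integrations are more involved.

```python
import heapq
import itertools


class ReadingWorklist:
    """Reading queue where AI-flagged studies jump ahead; every study still gets a human read."""

    def __init__(self) -> None:
        self._heap: list[tuple[int, int, str]] = []
        self._arrival = itertools.count()  # tie-breaker keeps first-in, first-out order

    def add(self, study_id: str, ai_flagged_urgent: bool) -> None:
        priority = 0 if ai_flagged_urgent else 1  # lower number is read first
        heapq.heappush(self._heap, (priority, next(self._arrival), study_id))

    def next_study(self) -> str:
        _, _, study_id = heapq.heappop(self._heap)
        return study_id


worklist = ReadingWorklist()
worklist.add("CT-101", ai_flagged_urgent=False)
worklist.add("CT-102", ai_flagged_urgent=True)  # e.g. suspected hemorrhage
worklist.add("CT-103", ai_flagged_urgent=False)
print([worklist.next_study() for _ in range(3)])  # ['CT-102', 'CT-101', 'CT-103']
```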

Case Study 2: AI Scribe in a High-Volume Primary Care Clinic

A family clinic with 60–90 patient visits per day used AI note generation to cut documentation time. Providers spent less time after hours finishing charts, and visit notes became more consistent. The clinic tracked a drop in chart completion delays and fewer billing interruptions caused by missing documentation. The doctor remained responsible for reviewing and signing notes, but the AI functioned like a speed layer.

Case Study 3: Revenue Cycle AI for Denial Reduction

A small multi-specialty clinic struggled with denials related to missing modifiers and incomplete documentation. A revenue cycle AI tool flagged risk before submission and suggested documentation prompts. Over time, the clinic saw improved clean-claim rates. This is one of the fastest ROI use cases because cash flow improves quickly.

Case Study 4: Remote Monitoring for Chronic Disease

AI tools that detect anomalies in remote monitoring data (blood pressure, glucose, ECG patterns) can help teams intervene early. Doctors still decide treatment, but AI improves signal detection and prioritization. This is especially valuable when staff is limited and patient volumes are high.
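A simple form of anomaly detection compares each new reading with the patient's own recent baseline using a rolling z-score. In the sketch below, the `flag_anomalies` function, the 7-reading window, the z-score threshold of 3, and the minimum standard deviation floor of 2.0 (in the reading's units, e.g. mmHg) are all hypothetical; flagged readings would go to a clinician review queue, not trigger treatment.

```python
import statistics


def flag_anomalies(readings: list[float], window: int = 7,
                   z_threshold: float = 3.0, min_stdev: float = 2.0) -> list[int]:
    """Return indices of readings far from the patient's own recent baseline.

    Each reading is compared with the mean and standard deviation of the
    previous `window` readings. The standard deviation is floored at
    `min_stdev` so that a very flat history does not flag tiny changes.
    """
    flagged = []
    for i in range(window, len(readings)):
        history = readings[i - window:i]
        mean = statistics.mean(history)
        stdev = max(statistics.stdev(history), min_stdev)
        if abs(readings[i] - mean) / stdev > z_threshold:
            flagged.append(i)
    return flagged


systolic = [128, 131, 127, 130, 129, 132, 128, 130, 165, 129]  # made-up readings
print(flag_anomalies(systolic))  # [8]
```

One known weakness of this simple approach: a flagged value stays in the next window and inflates the baseline, which is why production systems usually combine patient-relative rules with absolute limits.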

Deep Dive: What AI Can Replace vs What It Cannot

To understand replacement realistically, split medical work into layers:

Layer A — Data Tasks (Most Automatable)

• Summarizing records, matching patterns, reading routine images, spotting anomalies, transcribing notes, suggesting codes, generating drafts.

Layer B — Decision Tasks (Partially Automatable)

• Risk scoring, treatment suggestions, guideline recommendations, triage routing. AI can support, but human oversight remains critical.

Layer C — Responsibility Tasks (Hard to Automate)

• Consent, ethics, accountability, explaining uncertainty, negotiating treatment goals, and handling rare edge cases.

Layer D — Human Relationship Tasks (Least Automatable)

• Building trust, handling fear, delivering bad news, motivating behavior change, and respecting cultural realities.

This layered model explains why the idea of “AI replacing doctors” is exaggerated. AI replaces Layer A heavily, supports Layer B, and barely touches Layers C and D.

The Hidden Risk: Automation Bias and Over-Trust

A major danger is that clinicians may trust AI too much. This is called automation bias.

It happens when a human stops thinking critically because “the system must be right.” In medicine, that can be deadly.

To prevent automation bias:

• Require humans to independently assess key findings.

• Use AI as a second opinion, not a first authority.

• Track when humans disagree with AI and why.

• Run regular audits on false positives and false negatives.

• Train clinicians on model limitations, not just features.

The best hospitals treat AI like a junior assistant: useful, fast, but not the boss.
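Tracking disagreement and auditing false positives and negatives can be sketched as below. The `ReviewRecord` fields and the `audit` function are hypothetical; the key design choice is that the clinician's read is recorded before the AI output is revealed, which is what makes the disagreement rate meaningful.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Optional


@dataclass
class ReviewRecord:
    ai_positive: bool
    clinician_positive: bool  # independent read, recorded before seeing the AI output
    confirmed_positive: Optional[bool] = None  # later ground truth (e.g. follow-up), if known


def audit(records: list[ReviewRecord]) -> dict[str, float]:
    """Disagreement rate across all cases, plus AI errors where ground truth is known."""
    disagreements = sum(r.ai_positive != r.clinician_positive for r in records)
    ai_errors: Counter = Counter()
    for r in records:
        if r.confirmed_positive is None:
            continue  # no ground truth yet, so the case cannot be scored
        if r.ai_positive and not r.confirmed_positive:
            ai_errors["false_positive"] += 1
        elif not r.ai_positive and r.confirmed_positive:
            ai_errors["false_negative"] += 1
    return {
        "disagreement_rate": disagreements / len(records),
        "ai_false_positives": ai_errors["false_positive"],
        "ai_false_negatives": ai_errors["false_negative"],
    }


records = [
    ReviewRecord(True, True, True),
    ReviewRecord(True, False, False),  # disagreement; AI false positive
    ReviewRecord(False, True, True),   # disagreement; AI false negative
    ReviewRecord(False, False),        # agreement; ground truth not yet known
]
print(audit(records))
```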

Final Conclusion: The Real Future of Doctors in the Age of AI

So, can AI replace doctors?

The honest answer is no — but it will absolutely transform what being a doctor means.

Artificial intelligence is exceptionally powerful at analyzing data, detecting patterns, automating documentation, optimizing billing, and supporting diagnostics. In many narrow tasks, it already performs at or near specialist level. That reality cannot be ignored.